Introduction

Broadcast subtitles that can be switched on and off are a well-established service for TV programs[1]. With the digitalization of broadcast workflows and the increasing use of the Internet as a new distribution channel for video content, the production, transmission, and presentation of broadcast subtitles face challenges similar to those of the media components audio and video. But while solutions for audio and video are becoming more and more stable, the change process for broadcast subtitle workflows is still at its beginning.

One of the biggest challenges is the replacement of legacy subtitle file and transmission formats by new means that are expressive enough for a digital and hybrid broadcast scenario. Possibly the most popular new subtitle format is the Timed Text Markup Language (TTML) [TTML1] as specified by the W3C. As a vocabulary toolkit, TTML can meet the requirements of both production and distribution. It is used as an information container for authoring, exchange, and also presentation. Focusing on the XML specifics, the paper will analyze how TTML is used at different stages of the broadcast subtitle workflow. It will look at current problems, successful integration, and new opportunities. It will identify the missing bits in a TTML processing chain and ask whether the TV and video media sector could be an important new ecosystem for the development of the XML language.

The analysis builds on results of the HBB4ALL project [HBB4ALL]. HBB4ALL is a European project, co-funded by the European Commission and by 12 partners from complementary fields: universities, TV broadcasters, research institutes and small and medium-sized enterprises (SME)[2]. The goal of HBB4ALL is to investigate the opportunities and challenges for access services on Smart TVs and other Internet-connected devices. For subtitles as a media access service, one main goal of HBB4ALL was to bring the TTML-based EBU-TT-D format [EBU3380] to operational broadcast workflows.

TTML – Basic Structure

To better understand the challenges of integrating TTML into broadcast workflows, a short TTML overview is given below.

The minimum information that subtitles need to carry is timing and the subtitle content.

Timing is set with begin and end attributes on content elements such as the p element[3].

<p begin="0s" end="2s">Hello World</p>

Other main parts that need to be controlled are size, position and styling of the subtitle content.

Size and position of a subtitle block are set through the definition of a rectangular area called a region. This area can then be referenced through an ID/IDREF construct by content elements.

The size is defined with the extent attribute, and the position by giving the x,y position of the top-left vertex of the region with the origin attribute. Unless set otherwise by the “external context”, percentage values for origin and extent refer to the related video.

<region xml:id="r1" tts:origin="0% 0%" tts:extent="80% 20%"/>

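TTML has a variety of style properties that can be set on content. Most of them are derived from XSL-FO and CSS (e.g. color, background-color, font-size, and font-family). In TTML, compound property names are written in camel case (e.g. tts:backgroundColor and tts:fontSize).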

These style attributes can be set on content elements directly or on style elements that can be referenced.

<style xml:id="s1" tts:color="yellow" tts:backgroundColor="rgba(255,225,0,188)"/>

<p style="s1" begin="0s" end="2s">Hello world</p>
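The ID/IDREF mechanism for styles and regions can be illustrated with a short Python sketch. It is only an illustration under stated assumptions: the sample document, the constant names, and the helper `resolve_paragraphs` are hypothetical, and style inheritance, nested style references, and inline overrides are ignored.

```python
import xml.etree.ElementTree as ET

TT = "http://www.w3.org/ns/ttml"
TTS = "http://www.w3.org/ns/ttml#styling"
XML_ID = "{http://www.w3.org/XML/1998/namespace}id"

# Hypothetical sample document built from the fragments shown above.
SAMPLE = """\
<tt xmlns="http://www.w3.org/ns/ttml"
    xmlns:tts="http://www.w3.org/ns/ttml#styling">
  <head>
    <styling>
      <style xml:id="s1" tts:color="yellow"/>
    </styling>
    <layout>
      <region xml:id="r1" tts:origin="0% 0%" tts:extent="80% 20%"/>
    </layout>
  </head>
  <body>
    <div>
      <p style="s1" region="r1" begin="0s" end="2s">Hello world</p>
    </div>
  </body>
</tt>
"""


def resolve_paragraphs(ttml_text):
    """Return one dict per p element, merged with its referenced style and region."""
    root = ET.fromstring(ttml_text)
    # Index every element that carries an xml:id so references can be looked up.
    by_id = {el.get(XML_ID): el for el in root.iter() if el.get(XML_ID)}
    result = []
    for p in root.iter(f"{{{TT}}}p"):
        entry = {
            "begin": p.get("begin"),
            "end": p.get("end"),
            "text": "".join(p.itertext()).strip(),
        }
        for ref_attr in ("style", "region"):
            # The style attribute may list several IDs separated by spaces.
            for ref in (p.get(ref_attr) or "").split():
                target = by_id.get(ref)
                if target is None:
                    continue
                # Copy only attributes from the TTML styling namespace.
                for name, value in target.attrib.items():
                    if name.startswith(f"{{{TTS}}}"):
                        entry[name.split("}", 1)[1]] = value
        result.append(entry)
    return result


if __name__ == "__main__":
    for paragraph in resolve_paragraphs(SAMPLE):
        print(paragraph)
```

The lookup is done on namespace-qualified names, so the sketch does not depend on the prefixes chosen in the document.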

TTML also allows extension with data in user-defined namespaces.

<p begin="0s" end="2s" xmlns:foo="www.foo.com" foo:status="not approved">
  <tt:metadata>
    <foo:comment>Needs revision</foo:comment>
  </tt:metadata>Hello world
</p>

TTML for Authoring Subtitles

The human author of subtitles is in general non-technical and does not care about the underlying format. On the contrary: he or she does not want to be bothered with low-level file specifics. The human readability of the XML file format often does not help, because it gives the impression that the reader should be able to understand the underlying semantics, while the main audience of this type of format is most often software systems. Therefore, the reaction to a new XML format like TTML is often not overwhelmingly positive.

Subtitlers rely instead on an intuitive interface of the editing software that allows them to produce subtitles as fast as possible. This is especially important for subtitling of live events, where authoring speed is crucial and no editorial script exists as a text base. In the time-optimized authoring process, new extensible features of an XML-based format also mean potentially more work for the author, and in most situations manual authoring of the XML source is not an option.

For about forty years, manufacturers have refined professional subtitle preparation systems to meet the requirements of subtitle authors. In view of this history, TTML is a relatively new format, and most of the systems still use manufacturer-specific or standardized binary file formats to store the subtitle information. Although these binary formats may also contain text, they use byte codes for formatting and other control information.

More obvious is the advantage of an XML format for systems that produce subtitles automatically. The automatic generation of subtitles with speech-to-text technologies is one approach to increase the coverage of subtitles for the deaf and hard of hearing. The automatic translation of subtitles between different languages is another strategy to automate the authoring process. In contrast to the established subtitle editor software, these systems have more recent origins. They can make use of the latest technology, like TTML, without considering an existing code base. Providers and implementers of automatic subtitle production systems (who often are not at home in the subtitle domain) can make use of the advantage that TTML is more accessible than legacy binary formats that may also depend on undocumented practices. In addition, XML as an interchange format is very important for these types of systems because they have a stronger dependency on information exchange with third-party systems from other manufacturers.

Status Quo

Most professional subtitle preparation systems support TTML as an export format. For a long time, this was restricted to a W3C Candidate Recommendation of TTML. This version is also known under the acronym DFXP or TTAF [DFXP]. In the last few years, the export of TTML profiles that are derived from the stable version of TTML has become more widely supported. This includes open-standard specifications like EBU-TT [EBU3350][EBU3380] and company-specific profiles.

Compared to the export feature, TTML import features are less common.

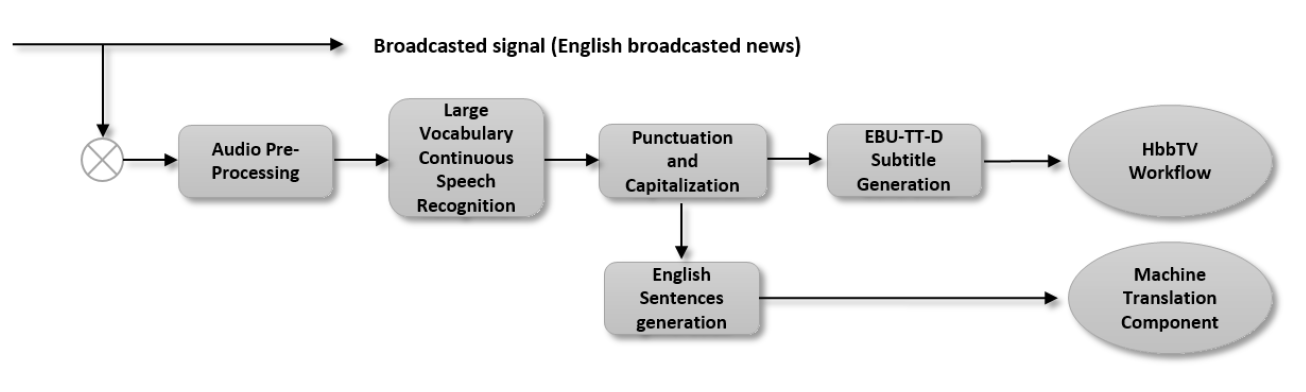

The native formats of preparation systems to store authored subtitle content remain standard or vendor-specific binary subtitle formats. Systems that automatically create subtitles already use TTML as a native format. One example is the Automatic Subtitling Component System implemented by the Spanish research center Vicomtech-IK4 in the HBB4ALL project. After the speech recognition and language processing, the result is saved as an EBU-TT-D XML document.[4]

Challenges

During the HBB4ALL project, some preparation systems were investigated with respect to their TTML support. As a positive result, it turned out that the minimum requirement to export well-formed XML is always met. On the other hand, it became obvious that in the first implementation stages, the TTML export rarely conforms to the specifications. Typical basic errors are the use of the attribute id in no namespace instead of xml:id, or the use of a digit as the first character of an xml:id value.
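Both basic errors can be detected without a schema. The following Python sketch is an illustration only, not part of the HBB4ALL tooling; the function name `find_id_problems` is hypothetical, and the check covers just the two mistakes named above.

```python
import xml.etree.ElementTree as ET

XML_ID = "{http://www.w3.org/XML/1998/namespace}id"


def find_id_problems(ttml_text):
    """Return messages for unqualified id attributes and xml:id values starting with a digit."""
    problems = []
    for el in ET.fromstring(ttml_text).iter():
        local_name = el.tag.split("}", 1)[-1]
        # An unqualified 'id' attribute is not the xml:id attribute that TTML expects.
        if "id" in el.attrib:
            problems.append(
                f"{local_name}: attribute 'id' is in no namespace, expected xml:id"
            )
        value = el.get(XML_ID)
        # xml:id values must be NCNames, which cannot start with a digit.
        if value is not None and value[:1].isdigit():
            problems.append(f"{local_name}: xml:id '{value}' starts with a digit")
    return problems


if __name__ == "__main__":
    sample = (
        '<tt xmlns="http://www.w3.org/ns/ttml"><head><styling>'
        '<style id="s1"/><style xml:id="1st"/><style xml:id="ok"/>'
        "</styling></head></tt>"
    )
    for message in find_id_problems(sample):
        print(message)
```

A full check would validate against a TTML XML schema; this sketch only shows how little code the two most common mistakes require.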

The investigation has shown that, although XML schemas exist, they are often not used until software providers are pointed to the schema and basic support in the use of XML schema validation is given. After a TTML XML schema is used in the implementation process, most of the specification errors disappear.

Also, the correct use of XML namespaces seems uncommon. While export results are mostly correctly namespaced, systems with import features have difficulties doing it the right way. In one case, TTML documents are accepted on import even though all elements are in “no namespace”, while TTML documents that do not use the same prefixes as in the TTML specifications are rejected. While the first issue can be seen as fault-tolerant design, the latter issue points to an incomplete implementation of XML namespaces.
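The difference between prefix matching and namespace matching can be shown in a few lines of Python. This is a minimal sketch with hypothetical sample documents; it only demonstrates that a namespace-aware lookup accepts any prefix and does not match elements in no namespace.

```python
import xml.etree.ElementTree as ET

TT_NS = "http://www.w3.org/ns/ttml"

# Three hypothetical inputs: default namespace, unusual prefix, no namespace.
DEFAULT_NS = '<tt xmlns="http://www.w3.org/ns/ttml"><body><div><p>Hi</p></div></body></tt>'
CUSTOM_PREFIX = (
    '<x:tt xmlns:x="http://www.w3.org/ns/ttml"><x:body><x:div>'
    "<x:p>Hi</x:p></x:div></x:body></x:tt>"
)
NO_NAMESPACE = "<tt><body><div><p>Hi</p></div></body></tt>"


def count_paragraphs(ttml_text):
    """Count p elements in the TTML namespace, whatever prefix the document uses."""
    root = ET.fromstring(ttml_text)
    # The lookup uses the namespace name, never the prefix.
    return len(root.findall(f".//{{{TT_NS}}}p"))


if __name__ == "__main__":
    for label, doc in (
        ("default namespace", DEFAULT_NS),
        ("custom prefix", CUSTOM_PREFIX),
        ("no namespace", NO_NAMESPACE),
    ):
        print(label, count_paragraphs(doc))
```

An importer built this way treats the first two documents identically and finds no TTML content in the third, which is the behavior the XML namespaces specification implies.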

Another issue is the expressive power of TTML. At first sight, this seems (and also is) a strength. The use of Unicode alone, which lifts the restriction on certain character code tables, is invaluable. But especially the possibility to express the same thing in a lot of different ways is often a showstopper for complete implementation and interoperability between the encoder and the decoder side. The five notations below all express the color red:

<p tts:color="red">Attention!</p>
<p tts:color="#FF0000">Attention!</p>
<p tts:color="#FF0000FF">Attention!</p>
<p tts:color="rgb(255,0,0)">Attention!</p>
<p tts:color="rgba(255,0,0,255)">Attention!</p>

Although the final responsibility lies with the authors of the specification, it is, paradoxically, the ease with which syntactic and semantic structures can be added in XML that often leads to a specification so large that it will never be fully implemented.
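A decoder has to reduce all of these color notations to one internal value. The Python sketch below shows one way to do that, assuming hex values carry the leading # that TTML requires. The function name `parse_color` is hypothetical, and the table of named colors is deliberately incomplete.

```python
import re

# Small subset of the TTML named colors, as (red, green, blue, alpha).
NAMED_COLORS = {
    "black": (0, 0, 0, 255),
    "white": (255, 255, 255, 255),
    "red": (255, 0, 0, 255),
    "yellow": (255, 255, 0, 255),
    "blue": (0, 0, 255, 255),
}


def parse_color(value):
    """Normalize a TTML color expression to an (r, g, b, a) tuple of 0..255 integers."""
    value = value.strip()
    if value in NAMED_COLORS:
        return NAMED_COLORS[value]
    # "#rrggbb" or "#rrggbbaa"; a missing alpha means fully opaque.
    match = re.fullmatch(r"#([0-9a-fA-F]{6})([0-9a-fA-F]{2})?", value)
    if match:
        rgb, alpha = match.group(1), match.group(2) or "FF"
        return tuple(int(part, 16) for part in (rgb[0:2], rgb[2:4], rgb[4:6], alpha))
    # "rgb(r,g,b)" or "rgba(r,g,b,a)" with decimal components.
    match = re.fullmatch(r"(rgba?)\(([^)]*)\)", value)
    if match:
        parts = [int(part) for part in match.group(2).split(",")]
        expected = 4 if match.group(1) == "rgba" else 3
        if len(parts) == expected and all(0 <= part <= 255 for part in parts):
            if expected == 3:
                parts.append(255)
            return tuple(parts)
    raise ValueError(f"unsupported color expression: {value!r}")


if __name__ == "__main__":
    for text in ("red", "#FF0000", "#FF0000FF", "rgb(255,0,0)", "rgba(255,0,0,255)"):
        print(text, parse_color(text))
```

The sketch is more tolerant than the specification in one respect: it accepts whitespace around the decimal components.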

Opportunities

So far, TTML has not realized its potential as a native format for manual subtitle creation.

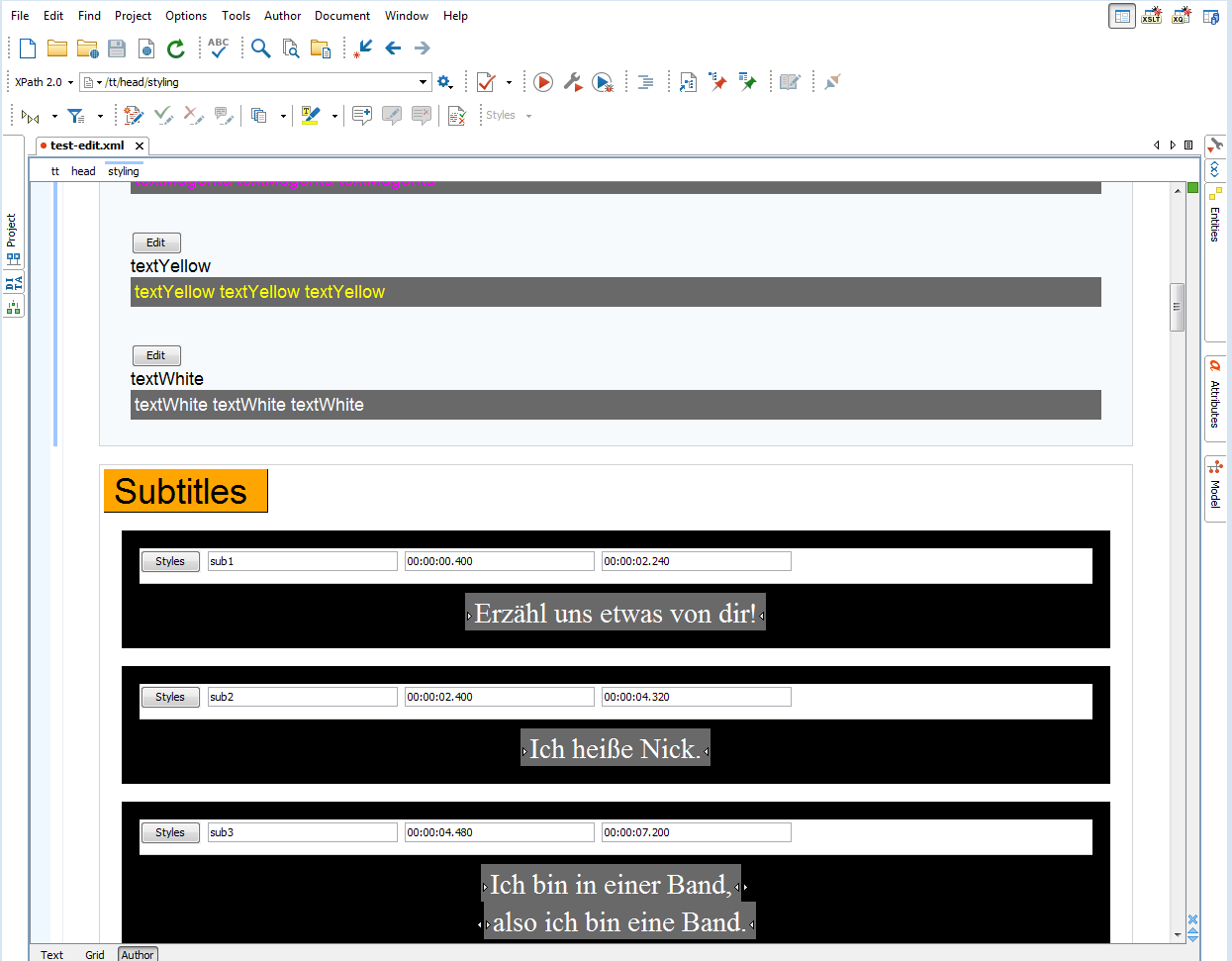

One approach to generating What-You-See-Is-What-You-Get (WYSIWYG) user interfaces is provided by the XML editor Oxygen [OxygenEBU]. It uses a combination of XML Schema with CSS styling. In the context of the HBB4ALL project, contact with the manufacturer of Oxygen (Syncro Soft) was established, and Syncro Soft provided a first prototype module for native editing of the EBU-TT TTML profile. One advantage of this approach is that through the integration of XML Schema, input is already validated during the authoring process. The challenge is to reach the level of usability that subtitlers know from established subtitle preparation systems.

TTML in Archiving and Content Management

In broadcast workflows, subtitles need to be managed like related video material. In broadcast operation, media asset management (MAM) systems are used, and for long-term storage, broadcast archive systems.

Processing of text-based formats is a fundamental feature of both kinds of systems, and XML is a very common format for a variety of import and export APIs.

Status Quo

Some MAM and archive systems already support the management of TTML files, but the main asset type for subtitles is still a binary subtitle format. The TTML support is mostly limited to a TTML document type that can only be used for Web distribution, and, although TTML has the full capability to capture it, important information that is needed for the subtitle broadcast workflows is missing in these XML documents.

Challenges

Because MAM and archive systems already have XML intelligence, the processing of XML subtitles should not be a big challenge. The main task is therefore to identify the important information elements that are useful for systems of those types. Timing and metadata information may have, for example, a much higher priority than styling and layout information.

Opportunities

Subtitles in an XML format like TTML have a huge potential for MAM and archive systems. The text-based format gives immediate data access. Because information is time-encoded, TTML can help to discover relevant parts in video content. TTML can also be used to implement preview features. If TTML is not supported directly by Web players, timecode and subtitle text can easily be translated with XSLT to simple text-based subtitle formats like SubRip (SRT) or the Web Video Text Tracks Format [WebVTT]. These formats are natively supported in HTML environments.
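The conversion to a simple text-based format is sketched below. The paper names XSLT for this step; the sketch uses Python instead so that it can be run without an XSLT processor. It is an illustration under stated assumptions: the function names are hypothetical, only clock times without frames and offset times in h, m, s, and ms are handled, timing inherited from parent elements is ignored, and line breaks inside a subtitle are collapsed.

```python
import re
import xml.etree.ElementTree as ET

TT = "http://www.w3.org/ns/ttml"
UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 0.001}


def to_seconds(expr):
    """Convert a simple TTML time expression to seconds."""
    match = re.fullmatch(r"(\d+):(\d{2}):(\d{2}(?:\.\d+)?)", expr)
    if match:  # clock time such as 00:00:02.500
        hours, minutes, seconds = match.groups()
        return int(hours) * 3600 + int(minutes) * 60 + float(seconds)
    match = re.fullmatch(r"(\d+(?:\.\d+)?)(ms|h|m|s)", expr)
    if match:  # offset time such as 2s or 500ms
        return float(match.group(1)) * UNIT_SECONDS[match.group(2)]
    raise ValueError(f"unsupported time expression: {expr!r}")


def vtt_timestamp(seconds):
    """Format seconds as a WebVTT timestamp hh:mm:ss.ttt."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{millis:03d}"


def ttml_to_webvtt(ttml_text):
    """Emit one WebVTT cue per timed p element."""
    lines = ["WEBVTT", ""]
    for p in ET.fromstring(ttml_text).iter(f"{{{TT}}}p"):
        begin, end = p.get("begin"), p.get("end")
        if begin is None or end is None:
            continue  # timing inherited from parent elements is not resolved here
        text = " ".join("".join(p.itertext()).split())
        lines.append(f"{vtt_timestamp(to_seconds(begin))} --> {vtt_timestamp(to_seconds(end))}")
        lines.append(text)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    sample = (
        '<tt xmlns="http://www.w3.org/ns/ttml"><body><div>'
        '<p begin="0s" end="2s">Hello World</p>'
        "</div></body></tt>"
    )
    print(ttml_to_webvtt(sample))
```

Because each cue keeps its begin and end time next to the text, the same traversal could also feed a text index that points search results to positions in the video.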

TTML in Contribution and Exchange

Because the same video content is distributed over a growing variety of distribution channels, and because new players – like web streaming providers – are changing the market, the exchange of video and subtitle content is getting more and more important.

Since the 1990s, the binary-based EBU-STL [EBU3264] has been a well-established file format for subtitle exchange in Europe. But with the introduction of High Definition Television (HDTV) and the growing importance of the Internet as a distribution channel, the teletext-based STL format no longer meets current and future requirements. The EBU has therefore specified the TTML profile EBU-TT as a successor format of EBU-STL.

Status Quo

In European broadcast operation, the contribution and exchange of subtitles for the playout of linear TV programs is still mainly based on EBU-STL.

But for the exchange of subtitles between broadcast operation and Web distribution, TTML is very common. One reason is that teams and organizations that have a focus on Web technology clearly prefer an XML format to a binary format. Even if TTML is not used as a Web player format, it is a good base for the conversion to other text-based subtitle formats.

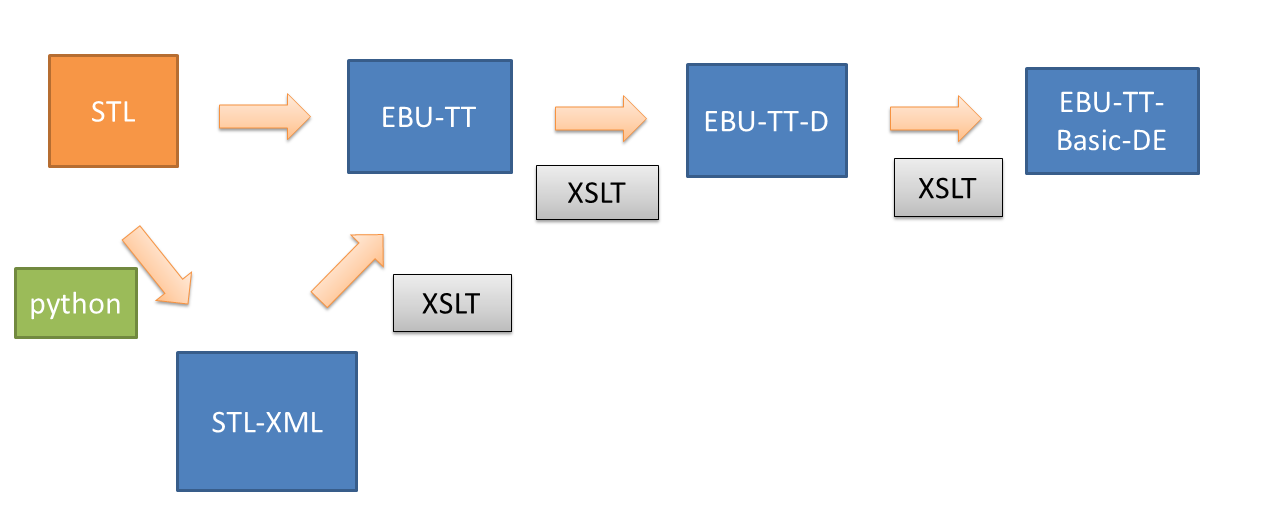

To facilitate the generation of TTML on the basis of the broadcast exchange format EBU-STL, the HBB4ALL project provides the subtitle conversion framework (SCF) [SCF].

The framework mainly consists of a Python script and several XSLT scripts to translate from EBU-STL into an XML representation of the binary STL format and from there into other TTML-based XML formats like EBU-TT.

As the exchange of subtitle files is typically a sender–receiver scenario where both sides have agreed on a content model, an XML schema is often used to validate TTML subtitle documents against this model. This has a clear advantage over the broadcast subtitle exchange format EBU-STL, where no vendor-independent file validation is possible.

Challenges

The mapping between EBU-STL and TTML plays a crucial part in a migration phase where EBU-STL may be replaced by TTML. The challenge is that the information structure of the teletext-based STL is very different from the TTML XML markup. One main difference is that teletext works with control codes that are all on the same hierarchy level. Another challenging difference is the use of spaces. Because teletext assumes a monospace text grid, spaces are often used to push subtitles to the desired horizontal position.

A typical teletext subtitle sequence in STL (written down in its XML representation) looks as follows:

<AlphaBlack/><NewBackground/><AlphaWhite/>
<StartBox/>White<space/>on<space/>Black<EndBox/>
<NewLine/>
<AlphaBlue/><NewBackground/><AlphaWhite/>
<StartBox/>White<space/>on<space/>Blue<EndBox/>

This sequence could be mapped to TTML as shown below.

<style xml:id="s1" tts:color="white" tts:backgroundColor="black"/>
<style xml:id="s2" tts:color="white" tts:backgroundColor="blue"/>

<span style="s1">White on Black</span>
<br/>
<span style="s2">White on Blue</span>

The first experience with transformations from legacy formats into TTML indicates that a change in subtitle design thinking is needed. It also has to be accepted that lossless round-tripping between legacy formats and TTML may not be possible without the use of source data tunneling.
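The step from flat control codes to nested TTML markup, as in the mapping shown earlier in this section, can be sketched as a small state machine. The Python code below is an illustration only and not the SCF implementation: the wrapping `row` element, the function name, and the default colors are assumptions, and real teletext details such as the display cell occupied by each control code are ignored.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

# The sample sequence, wrapped in a hypothetical <row> element to make it parseable.
STL_ROW = (
    "<row>"
    "<AlphaBlack/><NewBackground/><AlphaWhite/>"
    "<StartBox/>White<space/>on<space/>Black<EndBox/>"
    "<NewLine/>"
    "<AlphaBlue/><NewBackground/><AlphaWhite/>"
    "<StartBox/>White<space/>on<space/>Blue<EndBox/>"
    "</row>"
)


def stl_row_to_ttml(stl_xml):
    """Return (style_lines, content_lines) for one row of STL XML control codes."""
    foreground, background = "white", "black"  # assumed defaults
    style_ids = {}  # (foreground, background) -> generated style ID
    content = []
    pending = []  # text collected inside the current box
    in_box = False

    def flush():
        # Emit the collected box text as one span in the current colors.
        if pending:
            key = (foreground, background)
            if key not in style_ids:
                style_ids[key] = f"s{len(style_ids) + 1}"
            text = escape("".join(pending))
            content.append(f'<span style="{style_ids[key]}">{text}</span>')
            pending.clear()

    for code in ET.fromstring(stl_xml):
        if code.tag.startswith("Alpha"):
            foreground = code.tag[len("Alpha"):].lower()
        elif code.tag == "NewBackground":
            background = foreground  # background takes the current foreground color
        elif code.tag == "StartBox":
            in_box = True
        elif code.tag == "EndBox":
            flush()
            in_box = False
        elif code.tag == "space" and in_box:
            pending.append(" ")
        elif code.tag == "NewLine":
            flush()
            content.append("<br/>")
        # Text between control codes is stored as the tail of the preceding element.
        if in_box and code.tail:
            pending.append(code.tail)
    flush()
    styles = [
        f'<style xml:id="{sid}" tts:color="{fg}" tts:backgroundColor="{bg}"/>'
        for (fg, bg), sid in style_ids.items()
    ]
    return styles, content


if __name__ == "__main__":
    style_lines, content_lines = stl_row_to_ttml(STL_ROW)
    print("\n".join(style_lines + content_lines))
```

The sketch makes the structural difference visible: color state that teletext carries implicitly from code to code has to be collected and turned into named, referenced styles.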

Opportunities

By using XML technologies like XML Schema, Schematron, and XSLT, the subtitle conversion framework was able to demonstrate the advantages of TTML as an XML format. Especially the transformation between different TTML profiles with XSLT was possible at low implementation cost.

A module of the subtitle conversion framework (which has not been published yet) goes one step further. It implements a transformation from the XML representation of STL back into the binary STL form using XML technologies. This module was realized by the company BaseX GmbH using XQuery and the EXPath Binary and File Modules[5]. This type of implementation is an important bridge between the binary and XML world and possibly a pre-condition for the long-term replacement of EBU-STL. Even if TTML is used as the main subtitle exchange profile in the near future, there will still remain systems in the workflow that only understand a legacy format. Therefore it must be possible to generate legacy subtitle formats like EBU-STL from TTML XML.

TTML as Embedded Data in Transmission

At a certain point in the production workflow, subtitle information structures are not maintained separately anymore but are embedded into the video data. This often happens at playout by insertion of subtitle data into the uncompressed video data stream. The technology used is the Serial Digital Interface (SDI). For High Definition Video, subtitle data is inserted in the vertical ancillary data part (VANC) of the HD-SDI signal.

Status Quo

Until now, there is no defined way to embed TTML in the VANC of the SDI signal. Subtitle data is therefore only embedded in legacy formats like teletext.[6]

Challenges

VANC data in SDI is inserted into the non-picture regions of the video frame. The user data can be put into data packets which are each limited to 255 bytes. Because a typical TTML document exceeds this limit, the documents have to be split into several data packets. Together with this “chunking” mechanism, it also needs to be defined how one TTML document can be associated with a sequence of frames. Although, theoretically, TTML XML data could be inserted for each frame in the continuous data stream, this would be a waste of data space and also difficult to decode.
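What such a chunking mechanism has to provide can be sketched in Python. Since no standard defines the embedding, everything beyond the 255-byte limit is an assumption made for illustration: the two-byte header with a packet index and a packet count, the constant names, and both function names are hypothetical.

```python
MAX_PACKET_BYTES = 255  # user data limit per packet, as stated above
HEADER_BYTES = 2  # hypothetical header: packet index, total packet count


def chunk_document(document):
    """Split a serialized TTML document into packets of at most 255 bytes."""
    payload_size = MAX_PACKET_BYTES - HEADER_BYTES
    pieces = [
        document[start:start + payload_size]
        for start in range(0, len(document), payload_size)
    ] or [b""]
    if len(pieces) > 255:
        raise ValueError("document too large for a one-byte packet counter")
    # Each packet carries its own index and the total count for reassembly.
    return [bytes([index, len(pieces)]) + piece for index, piece in enumerate(pieces)]


def reassemble(packets):
    """Rebuild the document from packets that may arrive in any order."""
    ordered = sorted(packets, key=lambda packet: packet[0])
    total = ordered[0][1]
    if len(ordered) != total:
        raise ValueError("missing packets")
    return b"".join(packet[HEADER_BYTES:] for packet in ordered)


if __name__ == "__main__":
    document = b"<tt>" + b"x" * 600 + b"</tt>"
    packets = chunk_document(document)
    print(len(packets), [len(packet) for packet in packets])
    print(reassemble(packets) == document)
```

The sketch leaves open the second question raised above, namely how a reassembled document is associated with a sequence of video frames.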

Opportunities

The embedding of TTML in uncompressed video streams is less an option than a strong requirement. If subtitles are created and exchanged in TTML but subsequently translated back into legacy formats, then this will suppress exactly the information for which TTML was invented in the first place.

If, on the other hand, the opportunity is taken, it may be possible to close the last gap and realize a complete XML subtitle workflow.

TTML in Distribution

Distribution describes the last mile to bring broadcast content to the consumer device. In Europe the container format for linear digital broadcast content that is distributed over the air, via satellite or cable is the MPEG transport stream. Subtitles are multiplexed into this container format.

For Internet distribution, subtitles are either provided as a separate file or also multiplexed in a media container format. One of the most popular container formats for subtitles for Internet distribution is the MP4 format, which is based on the ISO base media file format (ISOBMFF).

For the transport of the linear broadcast program over the Internet, HTTP-based streaming technologies are used. Two of these technologies are the Apple-defined HTTP live streaming (HLS) [HLS] and Dynamic Adaptive Streaming over HTTP (DASH) [MPEGDASH], specified by the Moving Picture Experts Group (MPEG).

Status Quo

For linear digital TV, there is currently no standardized solution to add subtitles in XML to the MPEG transport stream. There are only standards to package teletext or bitmap subtitles in the transport stream.

For Internet distribution, TTML has already been in use for a long time. TTML documents are usually offered as separate HTTP downloads for Web video players.

For the MP4 container format, several specifications define how to package TTML in the MP4 container [TTML-IN-ISOBMFF][EBU3381]. These specified mechanisms are referenced by profiles of the MPEG DASH streaming technology and also by specifications for Hybrid-TV like HbbTV 2.0 [HBBTV2].[7]

Challenges

Although the use of TTML for Internet subtitles is well established, the history of use is also a challenge. The adoption of TTML started with DFXP as a pre-final Candidate Recommendation and quickly gained popularity. Unfortunately, the final TTML 1 specification changed not only the name of the format from “Timed Text (TT) Authoring Format 1.0 – Distribution Format Exchange Profile (DFXP)” to “Timed Text Markup Language 1 (TTML1)” but also replaced the XML namespaces (the local names of nearly all elements and attributes remained the same). This led not only to confusion (the older version is often not associated with TTML because of the different spec title) but also to an interoperability problem[8]. Although two simple XSLT scripts exist that replace namespaces in both directions out of the box[9], an XML-compliant TTML 1 player is not able to process DFXP files and an XML-compliant DFXP player is not able to process TTML 1 files.
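The namespace replacement itself is mechanical. The XSLT scripts mentioned above are the published solution; the Python sketch below only illustrates the idea and is not derived from them. The DFXP namespace base used here is an assumption that should be checked against the DFXP draft a given file was written for, and attribute values that contain DFXP URIs, such as profile designators, are not rewritten.

```python
import xml.etree.ElementTree as ET

# Assumed namespace bases; the "#styling", "#parameter" and "#metadata"
# suffixes are kept unchanged by the renaming below.
DFXP_BASE = "http://www.w3.org/2006/10/ttaf1"
TTML_BASE = "http://www.w3.org/ns/ttml"


def _rename(qname):
    """Map a qualified name from a DFXP namespace to the TTML 1 counterpart."""
    prefix = "{" + DFXP_BASE
    if qname.startswith(prefix) and qname[len(prefix)] in "}#":
        return "{" + TTML_BASE + qname[len(prefix):]
    return qname


def dfxp_to_ttml1(dfxp_text):
    """Parse a DFXP document and return its root element in the TTML 1 namespaces."""
    root = ET.fromstring(dfxp_text)
    for el in root.iter():
        el.tag = _rename(el.tag)
        renamed = {_rename(name): value for name, value in el.attrib.items()}
        el.attrib.clear()
        el.attrib.update(renamed)
    return root


if __name__ == "__main__":
    sample = (
        '<tt xmlns="http://www.w3.org/2006/10/ttaf1" '
        'xmlns:tts="http://www.w3.org/2006/10/ttaf1#styling">'
        '<body><div><p tts:color="red" begin="0s">Hi</p></div></body></tt>'
    )
    ET.register_namespace("tt", TTML_BASE)
    ET.register_namespace("tts", TTML_BASE + "#styling")
    print(ET.tostring(dfxp_to_ttml1(sample), encoding="unicode"))
```

The serialized result uses prefixes rather than a default namespace, which a namespace-aware consumer treats as equivalent.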

Another challenge is the packaging of TTML in media container formats like MP4. Although the process is specified, XML namespaces in particular cause problems. The MP4Box packager provided by the open-source project GPAC was the first implementation that made use of TTML packaging in MP4 [MP4BOX][GPAC-SUB]. But in the first version, it only supported TTML documents where the default namespace was set and no prefixes were used for the TTML elements. In a revised version, it now allows prefixes for TTML elements and TTML attributes, but only when the same prefixes are used as in the TTML 1 spec [GPAC-EBU].

Opportunities

Because specifications for connected TVs like HbbTV 2.0 already mandate TTML as a subtitle format, the Internet distribution of TTML subtitles may also lead to a broadcast distribution of TTML. The text-based character of TTML is a further advantage that makes it very attractive for distribution over air, cable, or satellite. Although TTML subtitles may have a higher data rate than legacy teletext subtitles, they have a considerably lower data rate than bitmap subtitles, which are currently used for high-quality broadcast subtitles. This data-rate advantage becomes particularly significant in view of bandwidth limitations.

TTML as Subtitle Presentation Format

The number of different terminal device types on which broadcast content can be consumed keeps growing. TV programs are watched on smartphones, tablets, laptops, workstations and large HD and UHD panels.

Therefore, interoperable subtitle presentation across different devices and platforms is crucial. This is not only a task for manufacturers but also for content service providers. In Web environments, they do not depend on native subtitle rendering by the consumer device but often implement their own Web player with Web technologies like HTML, CSS, and JavaScript.

Status Quo

There are numerous Web player products that support TTML to render Internet subtitles. Some of them are freely available, but the majority is implemented directly by broadcasters and content service providers. One well-known player product that supports TTML is iPlayer by the BBC.

Some of these Web players still support only the DFXP versions of TTML, but others already use the stable TTML 1 version. Like other broadcasters in Europe, the HBB4ALL project makes strong use of the EBU-TT-D TTML profile for the presentation of subtitles. Open-source projects like the Timed Text Toolkit and the EBU-TT-D Application Samples are available to help implementations reach standard conformance[10].

Although no native TTML support is provided by Web browsers, it will be present in TV devices. The published HbbTV 2.0 standard already mandates the support of TTML, and so does the upcoming ATSC 3.0 standard [ATSC3].

Challenges

To get a glimpse of the challenges of using TTML as a subtitle presentation format, it is helpful to look at current open-source implementations of TTML decoders. One of the most advanced implementations is the TTML parser by Solène Buet.[11] It was implemented in JavaScript and merged into the very widespread MPEG DASH player DASH.js.[12]

The implementation transforms TTML into JSON as its first step, so the XML ecosystem is left right at the beginning. Once the data is available in JSON, it is translated into HTML/CSS semantics and inserted into the shadow DOM of the Web browser.

In this approach, it is worth noting that no XML technologies are used and that the styling and layout model of TTML needed to be migrated to CSS.

This pattern can also be noticed in other player implementations. It can be explained by the limited XML support and the missing TTML support of Web browsers. The questions are therefore whether HTML subtitles would be the better format to provide to browser-based video players, or whether there is a better way to exploit the remaining XML capabilities of browsers.
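The two-step pattern described in this section, TTML to JSON and then JSON to HTML/CSS, is sketched below in Python. The sketch does not reproduce the DASH.js code: all function names and the property table are hypothetical, only three style properties are mapped, and values are passed through unchanged even though several TTML values (for example rgba colors or cell-based sizes) would need conversion before they are valid CSS.

```python
import html
import json
import xml.etree.ElementTree as ET

TT = "http://www.w3.org/ns/ttml"
TTS = "http://www.w3.org/ns/ttml#styling"

# Hypothetical mapping from TTML style attribute names to CSS property names.
CSS_NAMES = {
    "color": "color",
    "backgroundColor": "background-color",
    "fontFamily": "font-family",
}


def ttml_to_cues(ttml_text):
    """Step 1: reduce each p element to a plain, JSON-serializable dict."""
    cues = []
    for p in ET.fromstring(ttml_text).iter(f"{{{TT}}}p"):
        styles = {
            name.split("}", 1)[1]: value
            for name, value in p.attrib.items()
            if name.startswith(f"{{{TTS}}}")
        }
        cues.append({
            "begin": p.get("begin"),
            "end": p.get("end"),
            "text": "".join(p.itertext()).strip(),
            "styles": styles,
        })
    return cues


def cue_to_html(cue):
    """Step 2: express one cue as an HTML fragment with inline CSS."""
    css = "; ".join(
        f"{CSS_NAMES[name]}: {value}"
        for name, value in cue["styles"].items()
        if name in CSS_NAMES
    )
    return f'<div style="{html.escape(css)}">{html.escape(cue["text"])}</div>'


if __name__ == "__main__":
    sample = (
        '<tt xmlns="http://www.w3.org/ns/ttml" '
        'xmlns:tts="http://www.w3.org/ns/ttml#styling"><body><div>'
        '<p begin="0s" end="2s" tts:color="yellow" '
        'tts:backgroundColor="black">Hello world</p>'
        "</div></body></tt>"
    )
    cues = ttml_to_cues(sample)
    print(json.dumps(cues, indent=2))
    print(cue_to_html(cues[0]))
```

After the first step, nothing in the data refers to XML any more; namespaces, IDs, and the TTML layout model all have to be resolved before or during that step.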

Opportunities

The presentation of TTML subtitles for Internet video content could be the driver to bring TTML into the complete broadcast production chain.

It is important to point out that although TVs use HTML standards, they do not depend on them. The main TV standards are not published by the W3C but by industry-specific standards developing organizations (SDOs) like the Society of Motion Picture and Television Engineers (SMPTE), the Digital Video Broadcasting Project (DVB), the European Broadcasting Union (EBU), the Advanced Television Systems Committee (ATSC), and the HbbTV Association. All of these organizations have adopted TTML in one form or another. While XML may be regarded as legacy technology in the browser-based Web world, it advances to the front end in other environments.

Summary

By looking at the different stages of the workflows for broadcast subtitles, it was shown that XML subtitles have arrived in most parts of the chain. Obviously, the adoption is most advanced at the end where devices have been using TTML for a long time already.

It was also noted that in parts of the information flow, TTML needs to be translated back into legacy formats because media containers for video transport like SDI are not TTML-ready yet. To use the benefits of the new markup language from end to end, it is very important to start a standardization initiative to close these gaps.

Where TTML is already implemented, the benefits of using XML technologies like XML Schema, XSLT, and XQuery were demonstrated. On the other hand, this is mostly limited to software that specializes in handling TTML XML. The use of XML technologies in other established broadcast systems remains a challenge.

It can be expected that the use of TTML will be growing in all parts of the TV production chain. This is a signal not only for the broadcast community but also for the XML community. Both sides could learn from each other and also support each other to guarantee the maintenance and further development of mature technologies.

References

[ATSC3] Advanced Television Systems Committee (ATSC), ATSC Candidate Standard: Captions and Subtitles (A/343), Doc. S34 - 169r3, 23 December 2015. http://atsc.org/wp-content/uploads/2015/12/S34-169r3-Captions-and-Subtitles.pdf

[GPAC-EBU] Bouqueau, Romain, EBU-TTD support in GPAC. https://gpac.wp.mines-telecom.fr/2014/08/23/ebu-ttd-support-in-gpac/

[GPAC-SUB] Concolato, Cyril, Subtitling with GPAC. https://gpac.wp.mines-telecom.fr/2014/09/04/subtitling-with-gpac/

[MPEGDASH] Dynamic adaptive streaming over HTTP (DASH) – Part 1: Media presentation description and segment formats, ISO/IEC 23009-1:2014.

[EBU3264] European Broadcasting Union (EBU), EBU Tech 3264, Specification of the EBU Subtitling data exchange format, February 1991. http://tech.ebu.ch/docs/tech/tech3264.pdf

[EBU3350] European Broadcasting Union (EBU), EBU Tech 3350, EBU-TT Part 1 Subtitling format definition, Version 1.0, July 2012. http://tech.ebu.ch/docs/tech/tech3350.pdf?vers=1.0

[EBU3380] European Broadcasting Union (EBU), EBU Tech 3380, EBU-TT-D Subtitling Distribution Format, Version 1.0, March 2015. https://tech.ebu.ch/docs/tech/tech3380.pdf

[EBU3381] European Broadcasting Union (EBU), EBU Tech 3381, Carriage of EBU-TT-D in ISOBMFF, Version 1.0, November 2014. https://tech.ebu.ch/publications/tech3381

[GOERNER2009] Görner, Larissa, Zusatzdienste bei HDTV, Diplomarbeit, Hochschule München, 2009.

[HBB4ALL] HBB4ALL, Hybrid Broadcast Broadband for All, project Web site. http://www.hbb4all.eu/

[HBB4ALLD3-2] HBB4ALL, Hybrid Broadcast Broadband for All, D3.2 – Pilot-A Solution Integration and Trials. http://www.hbb4all.eu/wp-content/uploads/2015/03/D3.2-Pilot-A-Solution-Integration-and-Trials-2015.pdf

[HBBTV2] HbbTV Association, HbbTV 2.0.1 Specification. http://www.hbbtv.org/wp-content/uploads/2015/07/HbbTV-SPEC20-00023-001-HbbTV_2.0.1_specification_for_publication_clean.pdf

[HLS] HTTP Live Streaming, project page, https://developer.apple.com/streaming/

[MP4BOX] MP4Box, GPAC, Multimedia Open Source Project, project Web site. https://gpac.wp.mines-telecom.fr/mp4box/

[OxygenEBU] oXygen framework for EBU-TT, https://github.com/oxygenxml/ebu-tt

[DFXP] Timed Text (TT) Authoring Format 1.0 – Distribution Format Exchange Profile (DFXP), Glenn Adams (ed.), W3C Candidate Recommendation 24 September 2009. https://www.w3.org/TR/2009/CR-ttaf1-dfxp-20090924/

[TTML1] Timed Text Markup Language 1 (TTML1)(Second Edition), Glenn Adams (ed.), W3C Recommendation 24 September 2013. https://www.w3.org/TR/2013/REC-ttml1-20130924/

[TTML-IN-ISOBMFF] Timed Text and Other Visual Overlays in ISO Base Media File Format, ISO/IEC CD 14496-30. http://mpeg.chiariglione.org/standards/mpeg-4/timed-text-and-other-visual-overlays-iso-base-media-file-format/text-isoiec-cd

[SCF] SCF, IRT-Open-Source. https://github.com/IRT-Open-Source/scf

[WebVTT] WebVTT: The Web Video Text Tracks Format, W3C Draft Community Group Report, Simon Pieters (ed.), Silvia Pfeiffer, Philip Jägenstedt, Ian Hickson (former ed.). https://w3c.github.io/webvtt/

[1] For ease of reading, only the term “subtitles” is used in this article and the term “captions” may be used interchangeably for the term “subtitles.”

[2] Because of this context, some results are limited to broadcast and subtitling technologies primarily used in Europe.

[3] All TTML XML samples assume a parent element where the default namespace is set to http://www.w3.org/ns/ttml. Prefixes are bound to namespaces as documented in TTML1.

[4] Cf. [HBB4ALLD3-2], page 114.

[5] Cf. https://github.com/IRT-Open-Source/scf/tree/master/modules/STLXML2STL

[6] Regarding insertion of subtitle data in SDI, cf. [GOERNER2009].

[7] Hybrid-TV (also known as connected TV) describes the capability of TV sets to access resources via the Internet (e.g. to combine them with content that is delivered over linear broadcast distribution).

[8] It is debatable how to judge early implementations of a W3C Candidate Recommendation. You may argue that specs should not be implemented until they reach Recommendation status. The important point is that the namespace change together with early adoption of the unfinished standard complicated the adoption of TTML.

[9] Cf. https://github.com/IRT-Open-Source/scf/tree/master/modules/TT-Helper/DFXP2TTML and https://github.com/IRT-Open-Source/scf/tree/master/modules/TT-Helper/TTML2DFXP

[10] Cf. https://github.com/skynav/ttt and https://github.com/IRT-Open-Source/irt-ebu-tt-d-application-samples